Best AI Podcast Transcription Tools 2026: Voxtral vs Fish Audio vs BibiGPT Compared

Compare the best AI podcast transcription tools in 2026: Mistral Voxtral Transcribe 2, Fish Audio STT, BibiGPT, Castmagic, and more — accuracy, pricing, and Chinese support reviewed.


The best AI podcast transcription tools in 2026 are BibiGPT (best for Chinese podcasts), Mistral Voxtral Transcribe 2 (best price-performance for English), and Fish Audio STT (best for multi-speaker emotion tagging). Each tool solves a different pain point: one-click multilingual transcription, ultra-low-cost bulk processing, or speaker-aware emotion annotations.

According to Mistral AI, Voxtral Transcribe 2 achieves approximately 4% word error rate on FLEURS benchmarks at $0.003/minute — 80% cheaper than ElevenLabs Scribe v2 and 3x faster. Fish Audio STT launched in March 2026 with automatic emotion and paralanguage tagging. Meanwhile, BibiGPT's support for 30+ platforms and deep Chinese audio optimization keeps it the go-to choice for Chinese podcast creators.


2026 AI Podcast Transcription Tools at a Glance

| Tool | Word Error Rate | Pricing | Chinese Support | Speaker Separation | Best For |
|---|---|---|---|---|---|
| BibiGPT | Excellent (dual-engine) | Subscription incl. | ⭐⭐⭐⭐⭐ | ✓ | Chinese podcasts, all-in-one workflow |
| Voxtral Transcribe 2 | ~4% WER | $0.003/min | 13 languages incl. Chinese | ✓ | Bulk English transcription, budget-focused |
| Fish Audio STT | Excellent | Low-cost API | ✓ | ✓ | Interview podcasts, emotion context |
| Castmagic | Excellent | $39+/mo | English-first | ✓ | Show notes & content repurposing |
| Cleanvoice AI | Good | $0.015/min | Limited | Limited | Noise removal, audio cleanup |
| ElevenLabs Scribe v2 | ~5% WER | $0.015/min | ✓ | ✓ | High accuracy, premium users |
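To make the per-minute prices in the table concrete, here is a minimal Python sketch that turns them into a total bill. The prices are the ones quoted in the table; the workload (100 episodes of 60 minutes) and the helper names `transcription_cost` and `savings_pct` are illustrative assumptions, and real invoices may differ because of volume discounts or billing minimums.

```python
# Per-minute list prices quoted in the comparison table (USD per audio minute).
PRICE_PER_MIN = {
    "Voxtral Transcribe 2": 0.003,
    "Cleanvoice AI": 0.015,
    "ElevenLabs Scribe v2": 0.015,
}


def transcription_cost(minutes: float, price_per_min: float) -> float:
    """Total cost in USD for a given number of audio minutes."""
    return minutes * price_per_min


def savings_pct(cheaper: float, pricier: float) -> float:
    """Percentage saved by paying `cheaper` instead of `pricier` per minute."""
    return (1 - cheaper / pricier) * 100


# Hypothetical workload: 100 episodes of 60 minutes each.
total_minutes = 100 * 60

for tool, price in PRICE_PER_MIN.items():
    print(f"{tool}: ${transcription_cost(total_minutes, price):.2f}")

saved = savings_pct(
    PRICE_PER_MIN["Voxtral Transcribe 2"],
    PRICE_PER_MIN["ElevenLabs Scribe v2"],
)
print(f"Voxtral vs. Scribe v2 savings: {saved:.0f}%")
```

At these list prices the gap between $0.003 and $0.015 per minute is the 80% (5x) difference cited elsewhere in this article.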

Mistral Voxtral Transcribe 2: The 2026 Price-Performance Champion

Voxtral Transcribe 2 is the most talked-about transcription release of 2026. According to VentureBeat:

- Accuracy: ~4% WER on FLEURS, outperforming GPT-4o mini Transcribe and Gemini 2.5 Flash

- Price: $0.003/minute — 80% cheaper than ElevenLabs Scribe v2

- Speed: ~3x faster processing than ElevenLabs Scribe v2

- Features: Speaker diarization, word-level timestamps, context biasing, 13 languages

- Deployment: Fully open source, can run on-device for privacy-sensitive use cases

For English-primary podcasts needing large-scale, budget-friendly transcription, Voxtral Transcribe 2 is the clear leader in 2026.
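A ~4% WER means roughly four word-level errors for every 100 words in the reference transcript. The sketch below shows one common way to compute WER: word-level edit distance divided by the reference length. It is a simplified illustration, not the FLEURS evaluation pipeline; published benchmarks usually apply more text normalization than the lowercase-and-split step assumed here, and the function name `word_error_rate` is our own.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


# One substituted word out of four reference words.
print(word_error_rate("the quick brown fox", "the quick red fox"))
```

Running the same function over your own episodes, with a hand-corrected transcript as the reference, is a practical way to check whether a vendor's benchmark numbers hold up on your audio.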

Fish Audio STT: The New Model That Understands Emotion

Fish Audio STT launched in March 2026 with a unique angle:

- Automatic emotion tagging: Detects and annotates speaker emotions (excitement, contemplation, pauses) in the transcript

- Paragraph-level timestamps: Word-precise time codes for video editing and subtitle sync

- 3 export formats: SRT, VTT, TXT — covers all major editing tool workflows

- Multi-speaker labeling: Automatically separates and labels different speakers

For interview and multi-guest podcasts, Fish Audio STT's emotion annotations give transcripts a conversational feel that plain text often lacks.
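If you need to build subtitle files from a speaker-labeled transcript yourself, the SRT format mentioned above is simple enough to generate by hand. The following is a minimal sketch; the `Segment` shape (start, end, speaker, text) is a hypothetical structure chosen for illustration and is not Fish Audio's actual response format.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical transcript segment; real tool output will differ."""
    start: float  # seconds from the beginning of the audio
    end: float    # seconds from the beginning of the audio
    speaker: str
    text: str


def srt_timestamp(seconds: float) -> str:
    """Format seconds as the SRT time code HH:MM:SS,mmm."""
    total_ms = round(seconds * 1000)
    hours, rem = divmod(total_ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    secs, ms = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{ms:03d}"


def to_srt(segments: list[Segment]) -> str:
    """Render segments as numbered SRT cues separated by blank lines."""
    blocks = []
    for index, seg in enumerate(segments, start=1):
        blocks.append(
            f"{index}\n"
            f"{srt_timestamp(seg.start)} --> {srt_timestamp(seg.end)}\n"
            f"{seg.speaker}: {seg.text}\n"
        )
    return "\n".join(blocks)


print(to_srt([
    Segment(0.0, 2.5, "Host", "Welcome back to the show."),
    Segment(2.5, 5.0, "Guest", "Thanks for having me."),
]))
```

Prefixing each cue with the speaker name is a design choice for readability; drop it if your editing tool handles speaker labels separately.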

BibiGPT: The Complete Solution for Chinese Podcasts

If your podcast is in Chinese, or you need more than transcription — summaries, chapter navigation, Q&A, and note export — BibiGPT delivers an all-in-one workflow no other tool matches.

Why Chinese podcasters choose BibiGPT:

- Platform support: Xiaoyuzhou, Ximalaya, Apple Podcasts, YouTube podcasts, Bilibili, and 30+ platforms — just paste the link

- Dual transcription engines: Switch freely between OpenAI Whisper and ElevenLabs Scribe for the right speed-accuracy tradeoff

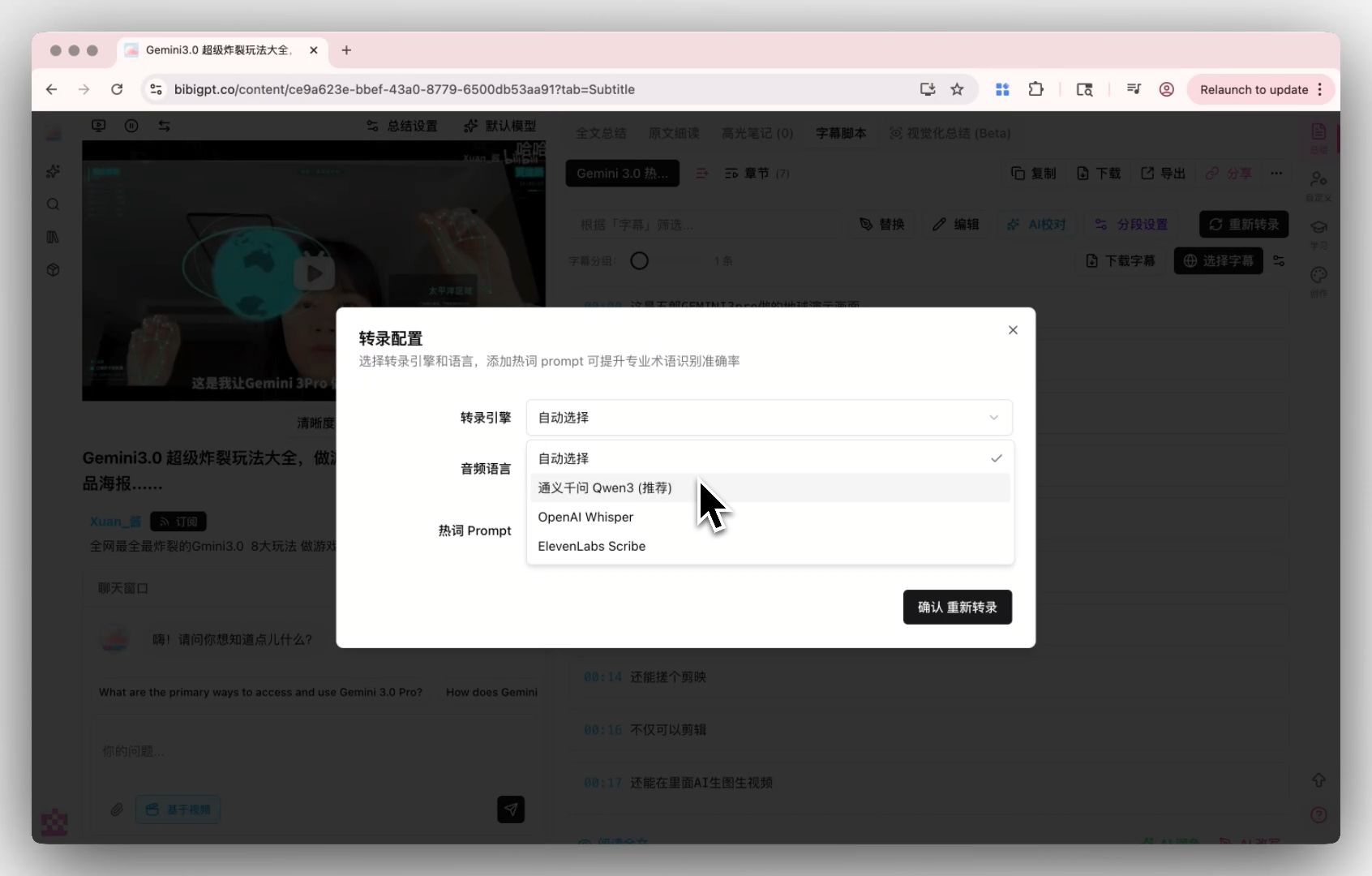

BibiGPT Custom Transcription Engine Configuration


- Beyond transcription: Generate structured summaries, mind maps, AI Q&A, and flashcards on top of the transcript

- Note export: Export to Notion, Obsidian, Readwise — build a searchable podcast knowledge base

- Trusted at scale: Over 1 million users across 30+ platforms

Explore BibiGPT's AI podcast summary feature and podcast transcript generator.

Castmagic: Best for Post-Transcription Content Repurposing

If your main goal after transcription is generating Show Notes, social media copy, and email newsletters, Castmagic is purpose-built for that workflow:

- Auto-generates Podcast Show Notes (with chapter titles and keywords)

- One-click Twitter/LinkedIn posts, email summaries

- Multi-language support (English-primary)

- From $39/month

Castmagic's weakness is limited Chinese support and a focus on English-first content creators.

How to Choose the Right AI Podcast Transcription Tool

Chinese podcasts / Multi-platform aggregation → BibiGPT: 30+ platform support, with transcription, summary, and Q&A in one place; the most complete Chinese ecosystem. Try free audio transcription online.

English podcasts / Low-cost bulk transcription → Voxtral Transcribe 2: $0.003/minute, best accuracy-per-dollar, open-source for self-hosting.

Interview / multi-speaker podcasts → Fish Audio STT: Emotion tagging and speaker separation make transcripts more readable and human.

Content repurposing / English creators → Castmagic: Automates the full content marketing workflow after transcription.

You can also combine tools: use Voxtral for cost-efficient bulk transcription, then bring the text into BibiGPT for AI-powered summarization and note-taking. See also: AI Podcast Summary Workflow Guide and Best AI Podcast Summarizer Tools 2026.

FAQ

Q: Which AI podcast transcription tool is most accurate in 2026? A: Voxtral Transcribe 2 achieves the best accuracy-to-cost ratio at ~4% WER for $0.003/min. By the figures in the comparison table, ElevenLabs Scribe v2 has a slightly higher error rate (~5% WER) and costs 5x more ($0.015/min). For Chinese audio, BibiGPT's transcription engine is optimized for Chinese phonemes and context.

Q: Is there a free AI podcast transcription tool? A: BibiGPT offers a free tier including basic transcription and AI summary — no credit card required. Voxtral Transcribe 2's open-source weights are free to self-host if you have technical resources.

Q: Does Voxtral Transcribe 2 support Chinese? A: Yes, it supports 13 languages including Mandarin. However, for Chinese podcasts with regional accents, slang, or platform-specific terminology, BibiGPT's specialized Chinese training gives it an edge.

Q: Can AI podcast transcription tools generate subtitle files? A: Yes. BibiGPT exports SRT/VTT subtitles, Fish Audio STT supports SRT and VTT export, and Voxtral's API allows custom output format configuration.

Start learning more efficiently with AI:

- 🌐 Official Website: https://aitodo.co

- 📱 Mobile Download: https://aitodo.co/app

- 💻 Desktop Download: https://aitodo.co/download/desktop

- ✨ Learn More Features: https://aitodo.co/features

BibiGPT Team