Best AI Video Summarizer 2026: ChatGPT vs Claude vs Gemini Multi-Model Comparison

The best AI video summarizer in 2026 lets you switch between ChatGPT, Claude, and Gemini. Compare multi-model strengths for video understanding, long-document analysis, and creative output. See why BibiGPT is the only tool that lets you choose your AI brain.

Table of Contents

- Why You Need a Multi-Model AI Video Summarizer in 2026

- 2026 Top 5 AI Video Summary Tools: Quick Ranking

- Why Multi-Model Switching Matters in 2026

- ChatGPT vs Claude vs Gemini: Strengths Compared

- BibiGPT Multi-Model Features: A Deep Dive

- Step-by-Step: How to Switch Models in BibiGPT

- FAQ

- Conclusion

Why You Need a Multi-Model AI Video Summarizer in 2026

In 2026, no single AI model is the best at everything. Gemini leads in video visual understanding. Claude excels at long-document analysis and natural prose. ChatGPT shines in creative multi-modal tasks. If you are locked into one model, you are leaving performance on the table every single day.

BibiGPT is the only commercial AI video assistant that lets you switch between multiple LLMs on demand. With 1M+ active users, 5M+ AI summaries generated, and support for 30+ platforms, it is purpose-built for the multi-model era.

2026 Top 5 AI Video Summary Tools: Quick Ranking

| Rank | Tool | Key Strength | Multi-Model |
|---|---|---|---|
| 1 | BibiGPT | 30+ platforms, multi-LLM switching, visual analysis, mind maps | ✅ |
| 2 | NoteGPT | YouTube note-taking | ❌ |
| 3 | Eightify | YouTube 8-point summaries | ❌ |
| 4 | ScreenApp | Screen recording + AI summary | ❌ |
| 5 | NotebookLM | Document chat and audio generation | ❌ |

The key difference: Every competitor above locks you into a single AI engine. BibiGPT is the only video AI assistant that lets you choose your brain. For a detailed NotebookLM vs BibiGPT breakdown, see our NotebookLM 2026 comparison review.

Why Multi-Model Switching Matters in 2026

You have probably noticed this yourself: the same AI tool delivers wildly different quality depending on the video type. A 90-minute finance lecture needs deep logical analysis. A travel vlog needs scene-by-scene visual understanding. A marketing reel needs punchy creative copy.

This is not a tool problem. It is a model problem.

The three dominant LLMs of 2026 each have distinct strengths:

- Gemini excels at understanding video frames — identifying people, scenes, objects, and actions in visual content analysis workflows

- Claude produces the most structured and naturally flowing long-form analysis, making it ideal for lecture and podcast breakdowns

- ChatGPT leads in creative multi-modal generation — from social media copy to cross-format content remixing

For anyone who depends on video for learning or content creation, multi-model switching is not a luxury. It is the single biggest efficiency unlock available in 2026 AI video summarizers. If you work heavily with podcasts, our Best AI Podcast Summarizer Tools 2026 guide covers model selection for audio-first content.

ChatGPT vs Claude vs Gemini: Strengths Compared

| Capability | Gemini | Claude | ChatGPT |
|---|---|---|---|
| Video visual understanding | ⭐⭐⭐⭐⭐ | ⭐⭐⭐ | ⭐⭐⭐⭐ |
| Long subtitle/document analysis | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Structured summarization | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Creative copy generation | ⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ |
| Multilingual capability | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ | ⭐⭐⭐⭐ |
| Logical reasoning | ⭐⭐⭐⭐ | ⭐⭐⭐⭐⭐ | ⭐⭐⭐⭐ |

Bottom line: There is no all-round champion — only scenario champions. The type of video you process determines which model is optimal, and BibiGPT lets you pick within a single interface.
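To make the "scenario champions" idea concrete, here is a minimal Python sketch that reads the star ratings from the table above and picks the top-rated model, either for one capability or for a weighted mix of capabilities. The ratings are this article's editorial scores, not benchmark results. The function names and the example weights are hypothetical and are not part of BibiGPT.

```python
# Star ratings transcribed from the comparison table above.
# They are the article's editorial scores, not benchmark results.
RATINGS = {
    "video visual understanding": {"Gemini": 5, "Claude": 3, "ChatGPT": 4},
    "long subtitle/document analysis": {"Gemini": 4, "Claude": 5, "ChatGPT": 4},
    "structured summarization": {"Gemini": 4, "Claude": 5, "ChatGPT": 4},
    "creative copy generation": {"Gemini": 3, "Claude": 4, "ChatGPT": 5},
    "multilingual capability": {"Gemini": 5, "Claude": 4, "ChatGPT": 4},
    "logical reasoning": {"Gemini": 4, "Claude": 5, "ChatGPT": 4},
}


def best_model(capability: str) -> str:
    """Return the model with the most stars for a single capability."""
    scores = RATINGS[capability]
    return max(scores, key=scores.get)


def best_for_task(weights: dict[str, float]) -> str:
    """Return the model with the highest weighted star total.

    `weights` maps capability names to how much they matter for a task.
    The weights are illustrative assumptions chosen by the caller.
    """
    totals: dict[str, float] = {}
    for capability, weight in weights.items():
        for model, stars in RATINGS[capability].items():
            totals[model] = totals.get(model, 0.0) + weight * stars
    return max(totals, key=totals.get)


if __name__ == "__main__":
    for capability in RATINGS:
        print(f"{capability}: {best_model(capability)}")

    # Hypothetical weighting for a travel vlog: mostly visual, some structure.
    travel_vlog = {
        "video visual understanding": 0.7,
        "structured summarization": 0.3,
    }
    print("travel vlog:", best_for_task(travel_vlog))
```

The weighted variant is useful when a video mixes needs, for example a lecture with dense slides, where no single row of the table settles the choice.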

Want to see how AI understands the visual content inside videos? Check out the visual content analysis feature.

BibiGPT Multi-Model Features: A Deep Dive

BibiGPT was built on a simple insight: different AI engines are best at different things, so users should pick the right brain for each task.

Why BibiGPT Is the Only Multi-Model Video Assistant

NoteGPT, Eightify, ScreenApp, Glarity, and NotebookLM all lock you into a single AI model. No matter what you feed them, they run the same engine under the hood. BibiGPT breaks that constraint:

- One-click switching: Select a different LLM directly on the summary interface

- Task-matched models: Finance analysis with Claude, travel vlogs with Gemini, marketing content with ChatGPT

- Side-by-side comparison: Run the same video through different models and compare outputs instantly

The Full BibiGPT Capability Stack

Beyond multi-model switching, BibiGPT delivers a complete video knowledge workflow:

- 30+ platform coverage: YouTube summaries, Bilibili summaries, podcast summaries, TikTok, Xiaohongshu, and more

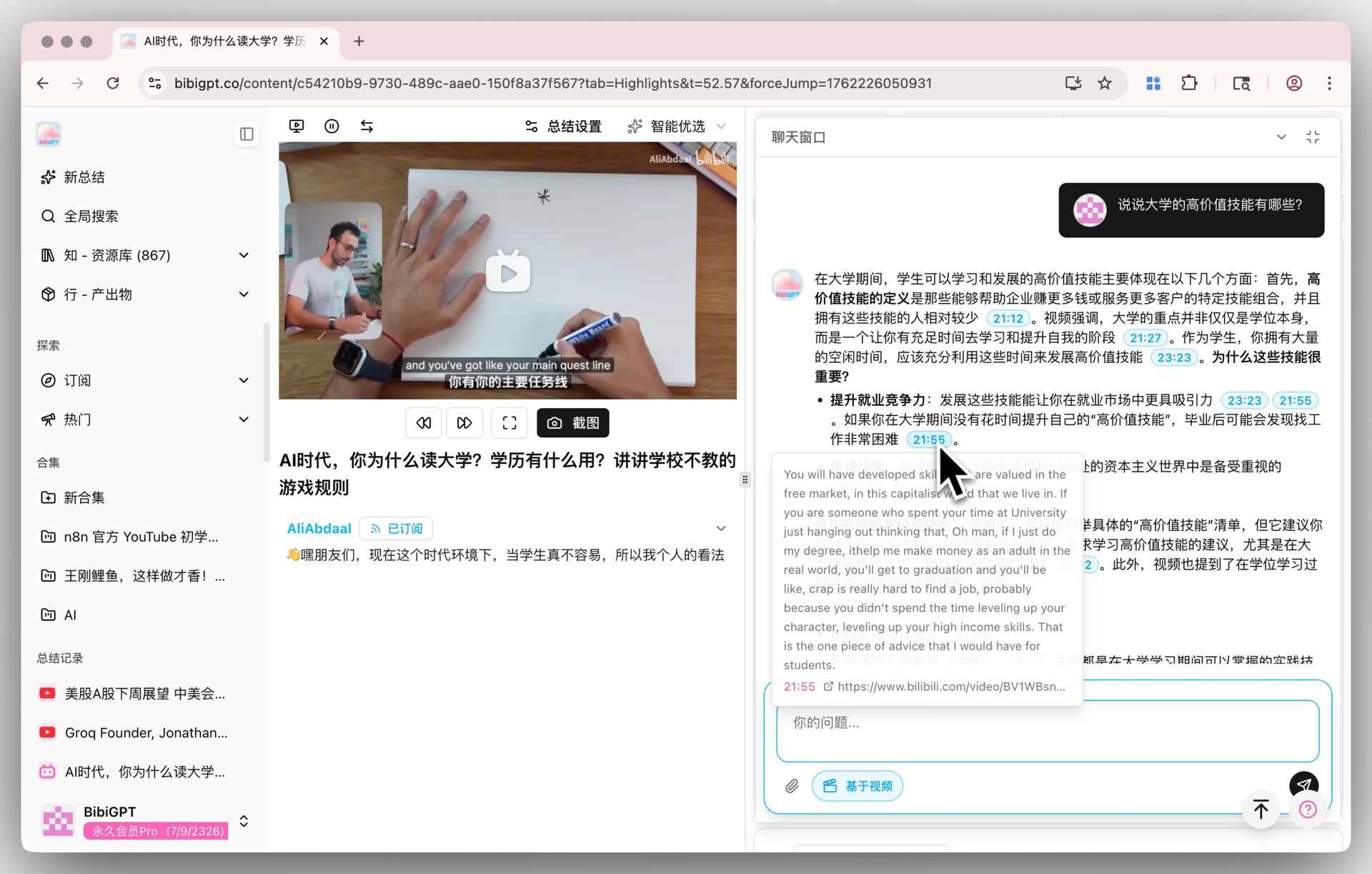

- AI dialog with source tracing: Ask questions about the video, get timestamped answers you can verify against the original

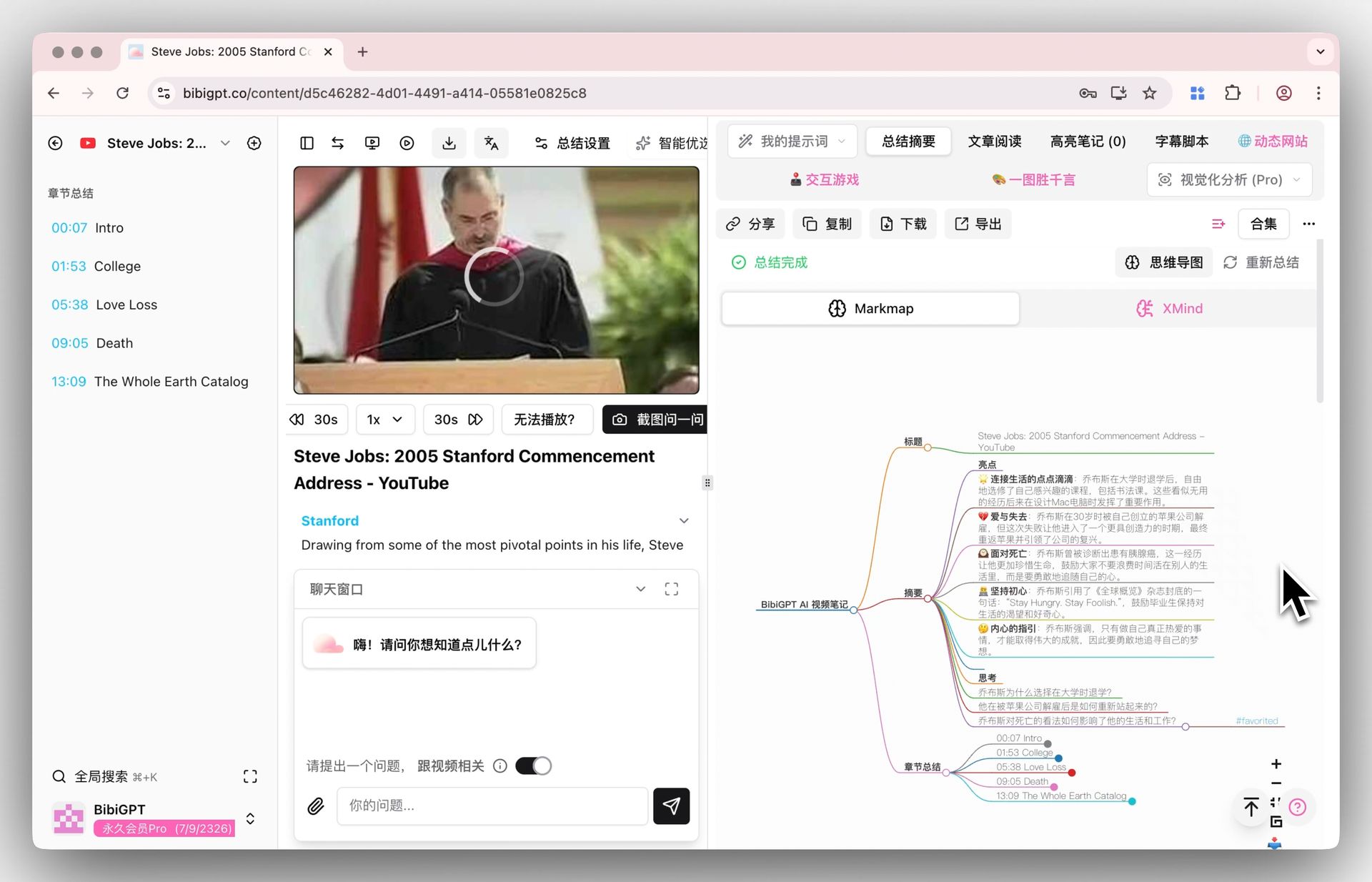

- Mind map generation: Auto-extract video structure into editable mind maps

- Multi-format output: Notes, articles, PPTs, and social media copy in one click

- Deep note integrations: One-click sync to Notion, Obsidian, and Readwise

AI video dialog tracing demo

Mind map display

See BibiGPT's AI Summary in Action

Let's build GPT: from scratch, in code, spelled out

Andrej Karpathy walks through building a tiny GPT in PyTorch — tokenizer, attention, transformer block, training loop.

Step-by-Step: How to Switch Models in BibiGPT

Follow these steps to summarize any video with the optimal AI engine in under 30 seconds.

Step 1: Paste your video link

Go to aitodo.co and paste the URL of the video you want to summarize. YouTube, Bilibili, TikTok, podcasts, and 30+ other platforms are supported.

Step 2: Choose your AI model

In the summary settings panel, you will see multiple available LLMs. Pick based on your scenario:

- Visual-heavy videos (vlogs, product reviews, cooking demos) → Gemini

- Long-form analysis (finance breakdowns, academic lectures, tech tutorials) → Claude

- Creative output (marketing scripts, social copy, content repurposing) → ChatGPT
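The rule of thumb above can be written down as a small lookup. The following Python sketch is illustrative only: the keyword lists, the `recommend_model` function, and the `"default engine"` fallback label are assumptions made for this example, and it does not call or represent any BibiGPT API.

```python
# Scenario -> recommended model, following the three bullets above.
RECOMMENDED = {
    "visual": "Gemini",
    "long_form": "Claude",
    "creative": "ChatGPT",
}

# Hypothetical keywords per scenario, taken from the examples in the bullets.
# Order matters: the first scenario with a matching keyword wins.
KEYWORDS = {
    "visual": ("vlog", "product review", "cooking"),
    "long_form": ("finance", "lecture", "tech tutorial"),
    "creative": ("marketing", "social", "repurpos"),
}


def recommend_model(description: str, default: str = "default engine") -> str:
    """Suggest a model from a free-text description of the video or task.

    Falls back to `default` when no keyword matches, so the caller can
    start with the default engine and switch later if needed.
    """
    text = description.lower()
    for scenario, keywords in KEYWORDS.items():
        if any(keyword in text for keyword in keywords):
            return RECOMMENDED[scenario]
    return default


if __name__ == "__main__":
    for sample in (
        "Weekend travel vlog in Kyoto",
        "Academic lecture on macroeconomics",
        "Marketing script for a product launch",
        "Unboxing stream",
    ):
        print(f"{sample!r} -> {recommend_model(sample)}")
```

Keyword matching is deliberately crude. In practice the choice is made by hand in the settings panel, and the sketch only shows how the three scenarios map to the three models.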

Step 3: Generate and compare

Hit generate. Then switch to a different model and regenerate to compare outputs side by side. Pick the result that best fits your needs.

Step 4: Export and collaborate

Export your summary as Markdown or PDF, or sync directly to Notion/Obsidian. You can also use the AI video-to-article workflow to turn video content into publishable articles.

Pro tip: Not sure which model to pick? Start with the default engine. If the output feels shallow or misses visual details, try switching. After a few tries, you will develop an instinct for matching models to video types.

FAQ

Q1: Does multi-model switching in BibiGPT cost extra?

A: Multi-model switching is included in BibiGPT membership plans. Both Plus and Pro subscribers can access different LLMs. Check the features page for quota details and available models.

Q2: How do I know which AI model is best for my video?

A: As a rule of thumb, use Gemini for visual-heavy content (vlogs, demos), Claude for long spoken content (lectures, podcasts), and ChatGPT for creative tasks (marketing copy, social media). You can also try multiple models on the same video and compare results directly.

Q3: What platforms does BibiGPT support?

A: BibiGPT supports 30+ platforms including YouTube, Bilibili, TikTok, Xiaohongshu, WeChat Channels, podcasts, and Twitter/X. See the full list on the BibiGPT features page. You can also explore our YouTube summary feature and podcast summary feature for specific use cases.

Q4: How much better is multi-model switching compared to single-model tools?

A: It depends on the task. For visual-dense videos (travel vlogs, cooking tutorials), Gemini summaries are roughly 40% richer than generic single-model outputs. For 2-hour academic lectures, Claude produces noticeably more coherent logical flow. Multi-model switching ensures you always deploy the strongest engine for the job at hand.

Conclusion

The AI video summarizer landscape in 2026 has entered a "model specialization" era. No single model wins everywhere; the right model depends on the task. BibiGPT is the only commercial video AI assistant that gives you the power to choose. Whether you are summarizing a visually rich vlog with Gemini, breaking down a dense finance lecture with Claude, or generating punchy marketing copy with ChatGPT, BibiGPT ensures you always use the best brain for the job. For a broader look at how BibiGPT stacks up as an overall product, read our Best AI Audio & Video Summary Tool 2026 deep dive.

Stop settling for one-size-fits-all AI. Start choosing the right model for every video.

Start your efficient AI learning journey now:

- 🌐 Official Website: https://aitodo.co

- 📱 Mobile Download: https://aitodo.co/app

- 💻 Desktop Download: https://aitodo.co/download/desktop

- ✨ Learn More Features: https://aitodo.co/features

BibiGPT Team