OpenClaw 250K GitHub Stars & Acquired by OpenAI: How BibiGPT AI Video Summary Wins in the Agent Era

OpenClaw broke GitHub's all-time fastest star growth record in 60 days. Its creator joined OpenAI to build agents for everyone. Here's how BibiGPT's AI video summarizer + bibigpt-skill completes your AI agent learning stack.

In February 2026, the AI world was shaken by a single open-source project.

Peter Steinberger's OpenClaw — a self-hosted AI agent framework that lets AI models directly control your computer — accumulated 250,000 GitHub Stars in just 60 days, breaking the all-time fastest growth record previously held by React (which took 10 years to reach the same milestone).

OpenAI saw what was happening and acted: Steinberger joined OpenAI to work on "bringing agents to everyone." The message was clear — AI agents are no longer a developer toy. They're about to become everyday infrastructure.

For knowledge workers who learn through video content, this shift has a direct implication: your AI assistant will soon be able to watch videos, extract insights, and build your knowledge base for you. BibiGPT is the piece that makes this possible.

What OpenClaw's Explosion Really Means: From "Chat AI" to "Action AI"

OpenClaw does one fundamental thing: it lets AI models take real actions on your computer instead of just answering questions.

- Automatically check, reply to, and archive emails

- Control browsers to fill forms, book appointments, and shop

- Execute scheduled "heartbeat tasks" every 30 minutes with zero human intervention

- Remote-control your Mac via Slack, WhatsApp, or Telegram

With 3,500+ community skills connecting it to ClickUp, GitHub, Figma, and smart home systems, OpenClaw represents a qualitative leap: from "AI that tells you what to do" to "AI that does it for you."

OpenAI bringing its creator on board was more than a talent hire. It was a declaration that AI agents are the next computing paradigm.

The Video Blind Spot That AI Agents Still Can't See

AI agents are now genuinely useful for collecting text, writing code, and calling APIs. But video remains an island — and an enormous one.

YouTube sees 500 hours of video uploaded every minute. Bilibili publishes millions of new videos every day. Podcasts, lectures, conference talks — the world's richest knowledge content exists locked in video form.

OpenClaw's native summarize skill handles YouTube via generic web scraping + LLM. But it can't touch:

- Bilibili (requires API authentication)

- Xiaohongshu (requires content parsing)

- Douyin/TikTok (requires short video format handling)

- Xiaoyuzhou / podcast platforms (requires audio transcription pipelines)

This is where bibigpt-skill comes in.
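As a rough illustration of that split, an agent could route each URL by hostname before choosing a summarizer. This is a minimal sketch, not part of bibigpt-skill or OpenClaw: the function names `detect_platform` and `needs_bibigpt_skill`, the platform labels, and the routing rule are all hypothetical. Only the domain names are the platforms' public ones.

```python
from urllib.parse import urlparse

# Hostname suffix -> platform label. The labels are illustrative assumptions.
PLATFORM_SUFFIXES = {
    "youtube.com": "youtube",
    "youtu.be": "youtube",
    "bilibili.com": "bilibili",
    "xiaohongshu.com": "xiaohongshu",
    "douyin.com": "douyin",
    "tiktok.com": "tiktok",
    "xiaoyuzhoufm.com": "xiaoyuzhou",
}

# Platforms listed above as out of reach for the native summarize skill.
SKILL_ONLY = {"bilibili", "xiaohongshu", "douyin", "tiktok", "xiaoyuzhou"}


def detect_platform(url: str) -> str:
    """Return a platform label for a video URL, or "unknown"."""
    host = (urlparse(url).hostname or "").lower()
    for suffix, platform in PLATFORM_SUFFIXES.items():
        # Match the bare domain or any subdomain of it (www., m., and so on).
        if host == suffix or host.endswith("." + suffix):
            return platform
    return "unknown"


def needs_bibigpt_skill(url: str) -> bool:
    """Hypothetical routing rule: True for platforms in SKILL_ONLY."""
    return detect_platform(url) in SKILL_ONLY
```

Matching on hostname suffixes rather than substrings avoids treating an unrelated domain that merely contains "bilibili" in its path as a Bilibili link.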

bibigpt-skill: Giving Your AI Agent Real Video Understanding

bibigpt-skill exposes BibiGPT's full video processing pipeline — built over years of deep integration with Chinese and global video platforms — as a standard CLI that any AI agent can call.

# Install bibigpt-skill in a Claude Code environment

npx skills add JimmyLv/bibigpt-skill

# Then tell your agent:

# "Summarize this Bilibili video and extract the three main arguments"

# The agent automatically calls bibi summarize

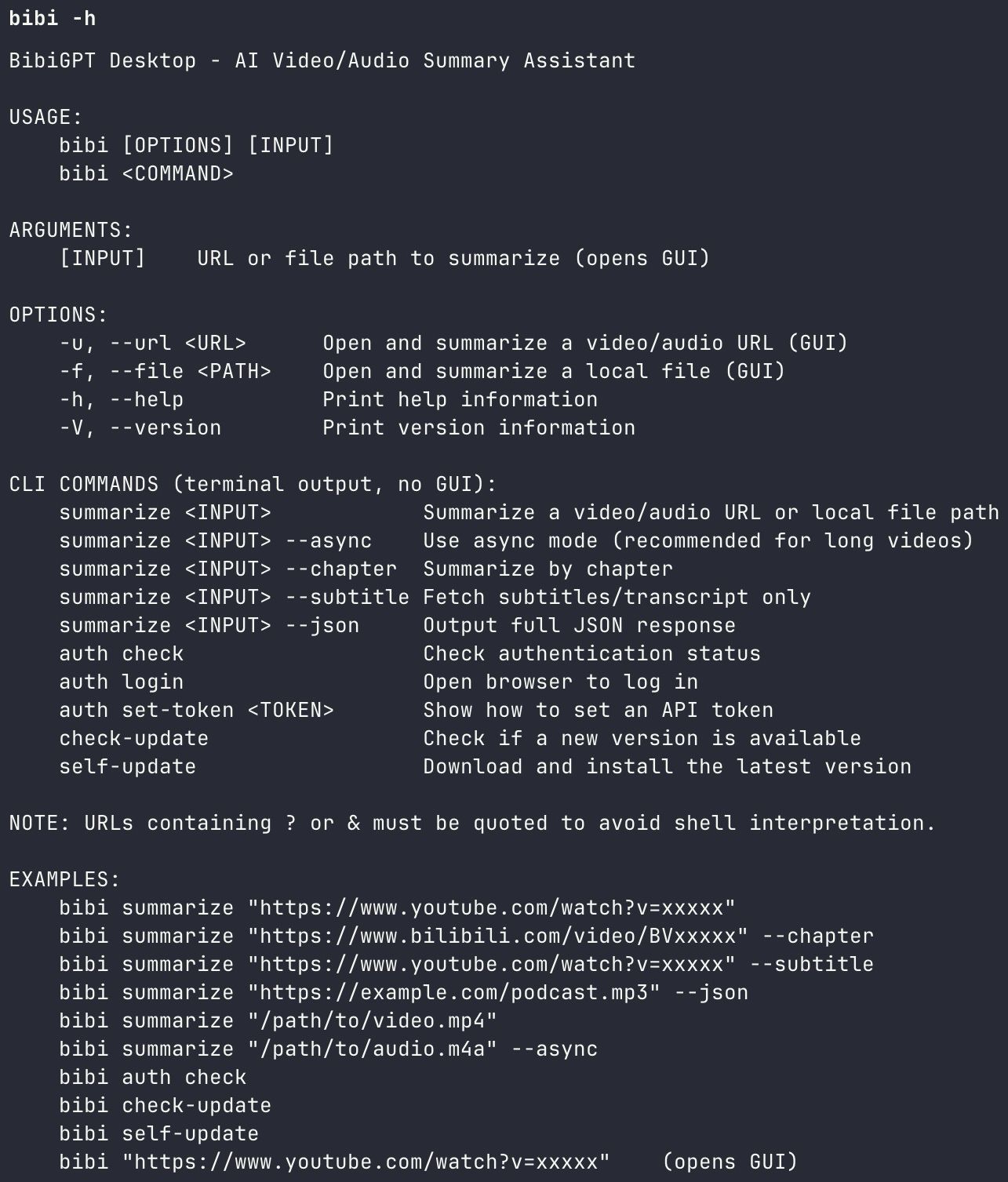

BibiGPT CLI and bibigpt-skill installation

For the full comparison (bibigpt-skill vs. OpenClaw native summarize) and step-by-step setup guide, see: OpenClaw + BibiGPT Skill: AI Video Summary for Bilibili, Xiaohongshu & Douyin.

The 5-Step AI Agent Video Learning Pipeline

Here's how to build a personal video learning automation system using BibiGPT + OpenClaw (or Claude Code):

Step 1: Automated Collection — Let the Agent Monitor Your Sources

Configure an OpenClaw heartbeat task to run every morning:

[OpenClaw Heartbeat Task]

Daily at 8am:

1. Fetch latest videos from subscribed YouTube channels and Bilibili creators

2. Run: bibi summarize "<url>" --chapter --json for each new video

3. Generate a Markdown digest and push to Slack / email

You go from "waiting for videos to reach you" to "your agent actively harvests knowledge for you."
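The heartbeat task above could be glued together along these lines. Treat it as a sketch under assumptions: the command arguments mirror the `bibi summarize "<url>" --chapter --json` line shown in the task, but the shape of the JSON output is not documented here, so the `title`, `url`, and `summary` keys used for the digest are hypothetical placeholders.

```python
import shlex


def build_summarize_command(url: str) -> list[str]:
    """Argument list for the summarize command shown in the heartbeat task."""
    return ["bibi", "summarize", url, "--chapter", "--json"]


def render_digest(date: str, entries: list[dict]) -> str:
    """Render a Markdown digest.

    Each entry is a dict with hypothetical keys: title, url, summary.
    """
    lines = [f"# Video digest for {date}", ""]
    for entry in entries:
        lines.append(f"## [{entry['title']}]({entry['url']})")
        lines.append("")
        lines.append(entry["summary"])
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


if __name__ == "__main__":
    new_videos = ["https://www.youtube.com/watch?v=VIDEO_ID"]  # placeholder URL
    for url in new_videos:
        # Print instead of executing, so the sketch runs without the CLI installed.
        print(shlex.join(build_summarize_command(url)))
```

Passing the command as an argument list, rather than a formatted shell string, keeps URLs with `&` or `?` from being interpreted by the shell if you later hand it to `subprocess.run`.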

Step 2: Structured Summaries — Knowledge Chunks, Not Stream of Consciousness

The bibi summarize --chapter mode breaks videos into chapter-level knowledge blocks, each containing:

- Core argument (1-3 sentences)

- Key data points

- Timestamp anchors for jumping back to source

Research in cognitive science (Mayer's Multimedia Learning Theory, 2021) shows structured summaries improve information retention by approximately 40% compared to linear reading.
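To make the idea of a chapter-level knowledge block concrete, here is one way to model it on the agent side. The `ChapterBlock` class and its field names are hypothetical, chosen to match the three elements listed above. They are not the actual schema returned by `bibi summarize --chapter`.

```python
from dataclasses import dataclass, field


@dataclass
class ChapterBlock:
    """One chapter-level knowledge block (field names are hypothetical)."""
    title: str
    core_argument: str
    start_seconds: int
    key_data_points: list[str] = field(default_factory=list)


def format_timestamp(seconds: int) -> str:
    """Format seconds as H:MM:SS, or M:SS when under an hour."""
    hours, rest = divmod(seconds, 3600)
    minutes, secs = divmod(rest, 60)
    if hours:
        return f"{hours}:{minutes:02d}:{secs:02d}"
    return f"{minutes}:{secs:02d}"


def to_markdown(block: ChapterBlock) -> str:
    """Render a block as a heading with its timestamp, argument, and data points."""
    lines = [
        f"### {block.title} ({format_timestamp(block.start_seconds)})",
        "",
        block.core_argument,
    ]
    if block.key_data_points:
        lines.append("")
        lines.extend(f"- {point}" for point in block.key_data_points)
    return "\n".join(lines)
```

Keeping the start time as plain seconds makes it easy to both display a readable timestamp and build a jump-back link later.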

Step 3: Deep Comprehension — Mind Maps + Source-Traced Q&A

After the automated summary, real learning begins. BibiGPT provides two advanced tools:

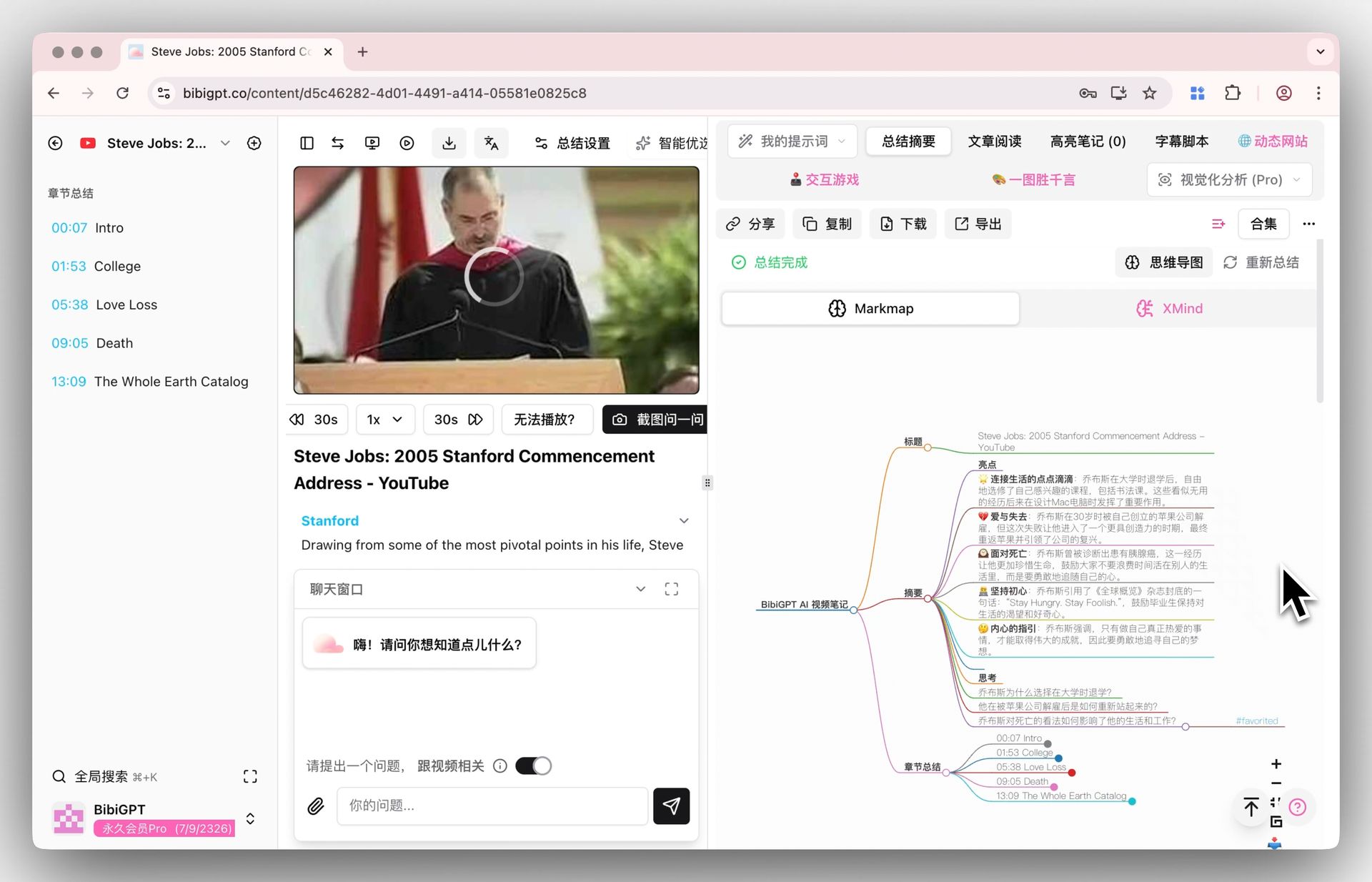

Inline Mind Map: Switch between text summary and XMind/Markmap view with one click — without leaving the page. The text summary gives you the details; the mind map gives you the structure.

BibiGPT inline mind map in XMind view

BibiGPT inline mind map in XMind view

AI Video Chat with Source Tracing: Ask questions about the video content in BibiGPT's chat window. Every answer comes with a clickable timestamp that jumps directly to the source clip in the video, so each claim can be checked against the original footage rather than taken on trust.
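If you want the same jump-to-source behavior in your own notes, a timestamp can be turned into a deep link. The sketch below is not how BibiGPT builds its links. It assumes the target platform honors a `t` query parameter given in seconds, as YouTube watch URLs do, and the helper name `with_timestamp` is hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def with_timestamp(url: str, seconds: int) -> str:
    """Return the URL with its `t` query parameter set to `seconds`.

    Assumes the platform reads `t` as a start offset in seconds.
    """
    parts = urlparse(url)
    # Drop any existing `t` so the link carries exactly one start offset.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key != "t"
    ]
    query.append(("t", str(seconds)))
    return urlunparse(parts._replace(query=urlencode(query)))
```

For example, `with_timestamp("https://www.youtube.com/watch?v=abc123", 90)` yields a link that opens the video 90 seconds in.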

Step 4: Active Recall — Flashcards + Anki Spaced Repetition

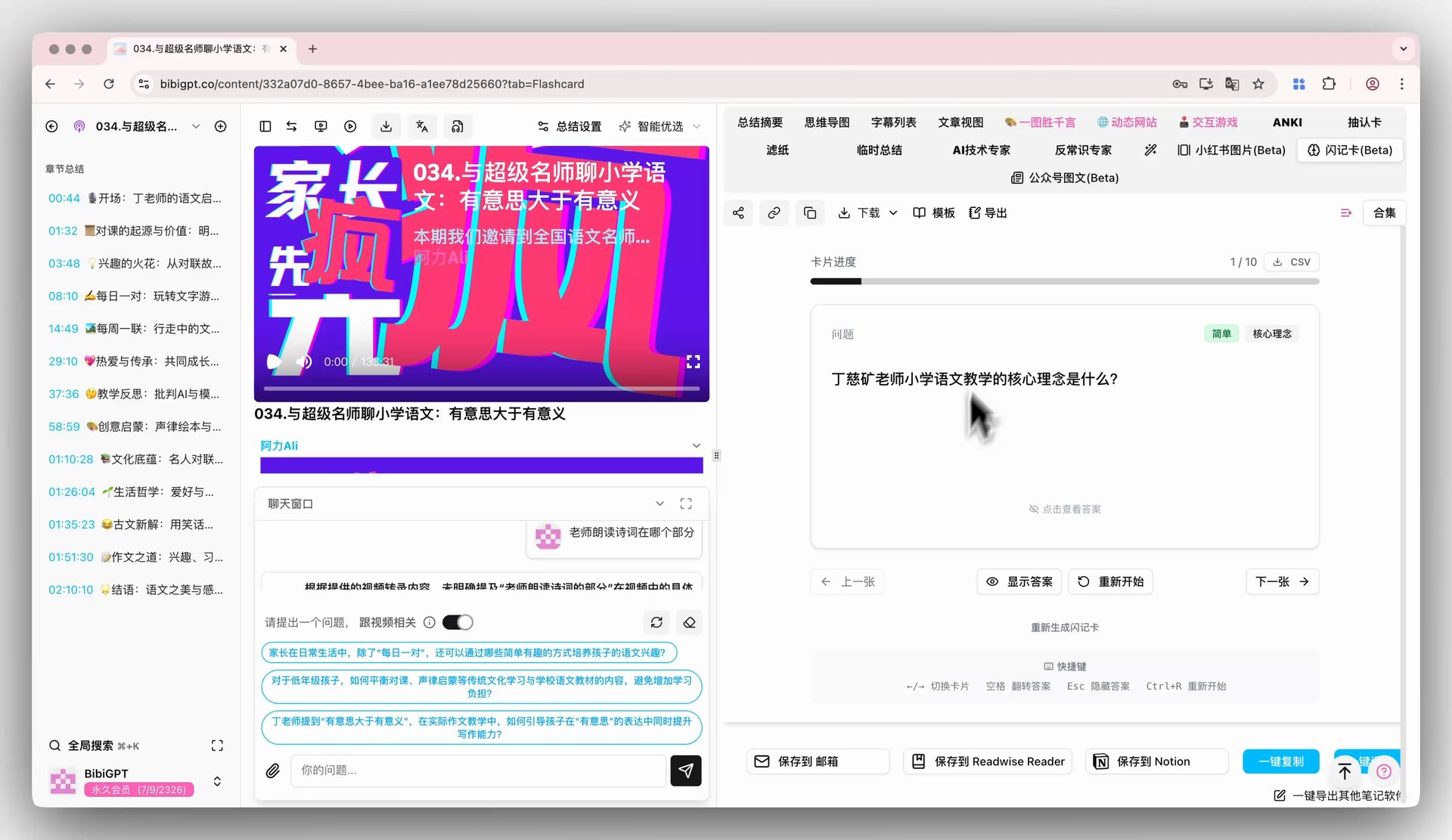

BibiGPT's Flashcard feature automatically converts video content into Q&A cards:

BibiGPT Flashcard: auto-generate Anki-ready cards from video

BibiGPT Flashcard: auto-generate Anki-ready cards from video

- Auto-generates Q&A cards from core concepts in the video

- Review interactively inside BibiGPT, rating difficulty per card

- Export as CSV with one click — import into Anki for spaced repetition

Spaced repetition is one of the most scientifically validated methods for long-term memory consolidation. Combined with BibiGPT's auto-generated flashcards, you stop "watching and forgetting" for good.
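For readers who generate cards outside BibiGPT, for instance from an agent's own notes, the same CSV route into Anki is easy to reproduce. This is a minimal sketch that assumes a two-column question/answer layout. The column layout of BibiGPT's own export is not specified here, and `cards_to_csv` is a hypothetical helper.

```python
import csv
import io


def cards_to_csv(cards: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as two-column CSV text."""
    buffer = io.StringIO()
    # The csv module quotes fields that contain commas, quotes, or newlines.
    writer = csv.writer(buffer, lineterminator="\n")
    for question, answer in cards:
        writer.writerow([question, answer])
    return buffer.getvalue()


if __name__ == "__main__":
    sample = [("What does OpenClaw let AI models do?", "Take real actions on your computer")]
    print(cards_to_csv(sample), end="")
```

Anki's text import accepts comma-separated files and lets you map the first column to the front of the card and the second to the back.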

Step 5: Knowledge Output — Turn Understanding Into Shareable Insight

The highest form of learning is being able to teach it. BibiGPT's highlight notes + AI polish features help you convert video fragments into publishable knowledge:

- Select and highlight key passages from summaries

- Generate shareable image cards (Xiaohongshu covers, X/Twitter card format)

- Export to Notion, Obsidian, or Readwise — plug into your PKM system

Why Video Learning Is More Valuable Than Ever in the Agent Era

OpenClaw's explosion reveals a trend: AI agents are rapidly automating the "information collection and processing" layer of knowledge work. This means competitive advantage for knowledge workers will increasingly concentrate in deep comprehension and insight generation — not information acquisition volume.

Video is the world's most knowledge-dense content format — and the hardest for AI agents to handle automatically. People who can systematically extract learning from video will have a disproportionate information advantage in the AI agent era.

BibiGPT automates the "video → structured knowledge" conversion and opens it to the entire AI agent ecosystem through bibigpt-skill.

OpenAI has already moved to build the AI agent infrastructure layer. Now is the best time to build your own video learning automation workflow on top of it.

Get Started: Build Your AI Agent Video Learning Stack

# Step 1: Install BibiGPT Desktop (includes bibi CLI)

brew install --cask jimmylv/bibigpt/bibigpt # macOS

# Step 2: Install bibigpt-skill

npx skills add JimmyLv/bibigpt-skill

# Step 3: Verify

bibi auth check

Related resources:

- bibigpt-skill full tutorial: OpenClaw + Bilibili/Xiaohongshu support

- BibiGPT v4.263.2: OpenClaw AI Agent Skill, winget & More

- Feynman Technique + BibiGPT: 4-Step AI Video Learning System

Start your AI agent learning workflow with BibiGPT:

- 🌐 Website: https://aitodo.co

- 💻 Desktop download (includes bibi CLI): https://aitodo.co/download/desktop

- ✨ All features: https://aitodo.co/features

BibiGPT Team