How a Consultant Mined 50 YouTube Industry Interviews in One Week With BibiGPT (Case Study)


Reflection from an interviewed independent consultant. “I” refers to the interviewee.

The bottleneck in industry research isn’t “finding sources”; it’s “digesting them.” When a client gave me one week to deliver an energy-storage report, I had identified 50 YouTube industry interviews, but no human can watch 50 hours of video in a single work week. Here’s the actual BibiGPT workflow that got it done: five working days, a 60-page deliverable, and an A-grade client sign-off.

Background

I’m an independent consultant focused on hardtech and energy market entry. My clients are PE funds, industry conglomerates, government think tanks. They don’t want “lots of research” — they want “fast, deep, with judgment.”

At the end of March 2026, an energy-storage project came in:

- Client: a top-tier PE fund

- Deadline: 5 working days

- Deliverable: 60-page deck covering tech roadmap, key players, business models, policy outlook, investment thesis

- Budget: less than one week of work, versus the traditional method of 3 analysts × 2 weeks plus overtime.

The hard part: the best primary information wasn’t in research papers but in industry interview videos, such as CEO podcast appearances, conversations with technical experts, and panel discussions at industry conferences. I shortlisted 50 YouTube videos, each 30 minutes to 2 hours long.

50 × 60 minutes (avg) = 50 hours. A work week is 40 hours.
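The arithmetic above is easy to check in a few lines of Python. This is a minimal sketch that assumes the 60-minute average and 40-hour week stated here; `viewing_hours` is a hypothetical helper, not part of BibiGPT. It also shows why 2x playback alone doesn’t rescue the schedule: 25 hours still consumes more than half the week before any synthesis or writing begins.

```python
WORK_WEEK_HOURS = 40   # one person's work week
NUM_VIDEOS = 50        # shortlisted interviews
AVG_MINUTES = 60       # average length assumed in the estimate

def viewing_hours(num_videos: int, avg_minutes: float, speed: float = 1.0) -> float:
    """Hours to watch every video end to end at the given playback speed."""
    return num_videos * avg_minutes / speed / 60

for speed in (1.0, 2.0):
    hours = viewing_hours(NUM_VIDEOS, AVG_MINUTES, speed)
    verdict = "over" if hours > WORK_WEEK_HOURS else "within"
    print(f"{speed:g}x playback: {hours:g} h ({verdict} a {WORK_WEEK_HOURS} h week)")
```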

Workflow Overview

| Day | Phase | What I Did With BibiGPT |
|---|---|---|
| Day 1 AM | Bulk ingest | Pasted all 50 YouTube links into BibiGPT and kicked off the batch-summarize queue |
| Day 1 PM | First-pass filter | Read 50 AI summaries, tagged them for “original insight,” cut 18 |
| Day 2-3 | Deep mining | Added the remaining 32 to a collection; used Collection AI Chat for cross-video Q&A |
| Day 4 AM | Highlight curation | Took highlight notes on the 12 core videos, organized by theme |
| Day 4 PM | Contrarian validation | Ran contradiction-finding prompts in Collection AI Chat; surfaced 5 cross-video disagreements |
| Day 5 | Report writing | AI Video to Article + manual integration → 60-page deck |

Key Step Details

Day 1: Convert “watch 50 videos” into “read 50 summaries”

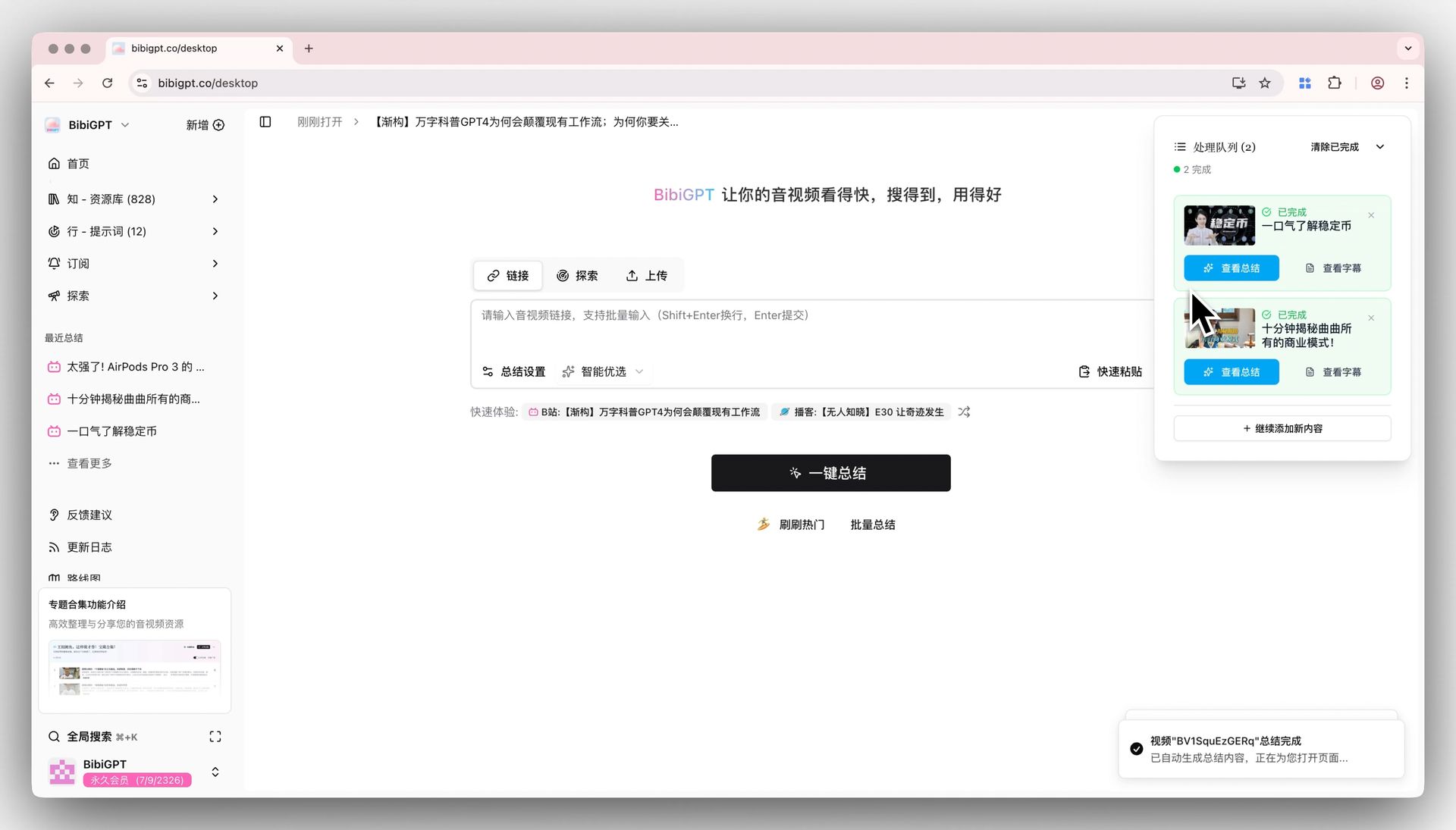

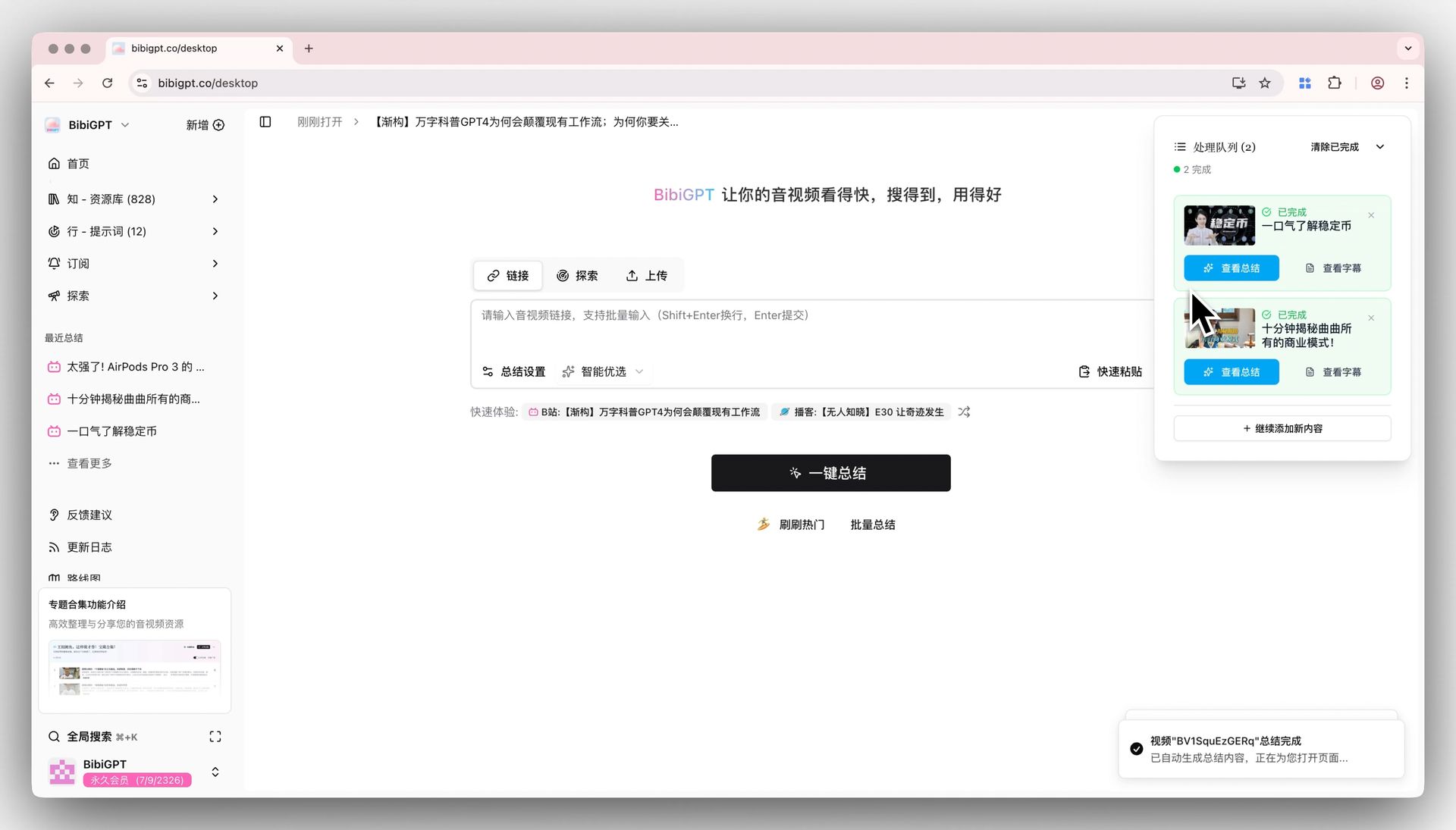

I went to BibiGPT’s home page, used multi-link batch summarize with Shift+Enter to paste all 50 YouTube links at once, and queued the batch.
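Before pasting, it can help to clean the link list, because a 50-link shortlist can easily contain duplicates or mixed URL shapes. The sketch below is a hypothetical pre-processing step, not a BibiGPT feature: it assumes you paste one link per line, normalizes `youtu.be`, `/watch?v=`, `/shorts/` and `/live/` links to one canonical form, and drops repeats of the same video.

```python
from typing import Optional
from urllib.parse import urlparse, parse_qs

def youtube_video_id(url: str) -> Optional[str]:
    """Extract the video ID from common YouTube URL shapes, or None if unrecognized."""
    parsed = urlparse(url.strip())
    host = parsed.netloc.lower().removeprefix("www.").removeprefix("m.")
    if host == "youtu.be":
        return parsed.path.lstrip("/") or None
    if host == "youtube.com":
        if parsed.path == "/watch":
            return parse_qs(parsed.query).get("v", [None])[0]
        if parsed.path.startswith(("/shorts/", "/live/")):
            return parsed.path.split("/")[2] or None
    return None

def batch_paste_text(urls: list[str]) -> str:
    """Hypothetical helper: dedupe by video ID and return canonical links, one per line."""
    seen: set[str] = set()
    lines: list[str] = []
    for url in urls:
        vid = youtube_video_id(url)
        if vid is None or vid in seen:
            continue  # skip unrecognized links and repeats of the same video
        seen.add(vid)
        lines.append(f"https://www.youtube.com/watch?v={vid}")
    return "\n".join(lines)
```

The output string can be pasted as-is; anything the parser doesn’t recognize is silently skipped, so compare the line count against your shortlist before queuing the batch.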

I went to lunch. Two hours later, all 50 were processed, each with a structured Smart Deep Summary (key takeaways, thinking prompts, a glossary, and clickable timestamps).

In the afternoon, I read summaries — not videos. The triage rule:

- Summary mentions “proprietary data,” “insider perspective,” “non-consensus take” → keep

- Summary reads like industry boilerplate → cut

50 → 32 candidates after the first pass.
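The triage was a human read, but the rule is mechanical enough to approximate in code as a pre-sort. This is a hypothetical sketch that assumes you have the summaries as plain text keyed by video title; literal phrase matching is a crude stand-in for the judgment described above, so treat its “cut” pile as a list to skim, not one to discard.

```python
# Signal phrases taken from the keep rule above; matching is case-insensitive.
KEEP_SIGNALS = ("proprietary data", "insider perspective", "non-consensus")

def triage(summaries: dict[str, str]) -> tuple[list[str], list[str]]:
    """Split video titles into (keep, cut) based on signal phrases in their summaries."""
    keep: list[str] = []
    cut: list[str] = []
    for title, summary in summaries.items():
        text = summary.lower()
        if any(signal in text for signal in KEEP_SIGNALS):
            keep.append(title)
        else:
            cut.append(title)  # no signal phrase found: likely boilerplate
    return keep, cut
```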

Day 2-3: Collection AI Chat replaces “watching everything”

I added the 32 to one collection (“Energy Storage 2026Q1 Interviews”) and opened Collection AI Chat. This is the linchpin step: instead of opening each video, I just asked.

Sample queries:

- “Across these 32 videos, how many distinct camps exist on energy storage business models? Who’s the spokesperson for each camp?”

- “Did interviewees mention the biggest 2026 policy uncertainty? Which videos talked about it?”

- “On tech roadmap, which is mentioned more — LFP or sodium-ion batteries? How does sentiment distribute?”

Every answer came with citations — I could click straight to the timestamp in the source video. This step compressed “consume 32 videos” into “ask + read AI synthesis + spot-check key citations.”
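Inside BibiGPT the citations are already clickable, but once a cited moment is copied into your own notes or deck, a plain YouTube link that starts at that timestamp keeps it checkable later. This sketch assumes citations display as `MM:SS` or `HH:MM:SS`; the helper names are hypothetical, and the link relies on YouTube’s `t` start-time parameter.

```python
def timestamp_to_seconds(ts: str) -> int:
    """Convert 'MM:SS' or 'HH:MM:SS' into whole seconds."""
    parts = ts.strip().split(":")
    if not 2 <= len(parts) <= 3:
        raise ValueError(f"unexpected timestamp format: {ts!r}")
    seconds = 0
    for part in parts:
        seconds = seconds * 60 + int(part)  # shift the previous unit up by 60
    return seconds

def citation_link(video_id: str, ts: str) -> str:
    """Build a YouTube link that starts playback at the cited moment."""
    return f"https://www.youtube.com/watch?v={video_id}&t={timestamp_to_seconds(ts)}s"
```

For example, `citation_link("VIDEO_ID", "12:34")` points at second 754 of that video.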

Day 4: Highlight Notes + Contrarian Validation

The collection chat surfaced 12 must-watch videos — the ones with proprietary data or non-consensus takes. I watched those 12 with Highlight Notes on, then sorted highlights by theme inside BibiGPT.

In the afternoon I did something traditional consulting research rarely does — contrarian validation. I asked Collection AI Chat:

- “If I want to argue ‘energy storage is in PE’s sweet spot for the next 3 years,’ what counter-evidence exists across these 32 videos?”

- “Which interviewee’s view is most challenged by others? What’s the core of the disagreement?”

The AI surfaced 5 cross-video contradictions, which became the heart of the “Risk Factors” section in the final deck. The client specifically singled out that section as standout work.
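One way to keep such candidate contradictions auditable is to log each claim with its speaker and source video, then list the topics where opposing stances meet. The sketch below is a hypothetical tracking structure, not how Collection AI Chat works internally; the `Claim` fields and the `"pro"`/`"con"` stance labels (relative to the thesis being tested) are assumptions.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass(frozen=True)
class Claim:
    video: str    # source video label
    speaker: str  # interviewee making the claim
    topic: str    # e.g. "LFP vs sodium-ion"
    stance: str   # "pro" or "con" relative to the thesis

def contradictions(claims: list[Claim]) -> dict[str, dict[str, list[str]]]:
    """Return only the topics that have both pro and con claims, with who said what."""
    by_topic: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for c in claims:
        by_topic[c.topic][c.stance].append(f"{c.speaker} ({c.video})")
    return {
        topic: dict(stances)
        for topic, stances in by_topic.items()
        if stances.get("pro") and stances.get("con")
    }
```

Each entry still needs a timestamp check before it goes into a deck; the structure only makes the disagreements easy to list.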

Day 5: From Notes to a 60-Page Deck

The last day was pure writing. I grouped highlight notes by deck section, used AI Video to Article to convert key segments from the 12 core videos into structured text, and pasted the results into my PPT template.
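If the highlight notes are exported, grouping them by deck section is easy to script. This minimal sketch assumes each note is tagged with one section name; the section list comes from the project brief plus the “Risk Factors” section mentioned earlier, but its order and the `group_by_section` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical deck order: sections from the brief plus "Risk factors"; adjust to your template.
SECTIONS = ["Tech roadmap", "Key players", "Business models",
            "Policy outlook", "Risk factors", "Investment thesis"]

def group_by_section(highlights: list[tuple[str, str, str]]) -> dict[str, list[str]]:
    """Group (section, video, note) tuples into deck order; unknown sections go last."""
    grouped: dict[str, list[str]] = defaultdict(list)
    for section, video, note in highlights:
        key = section if section in SECTIONS else "Unsorted"
        grouped[key].append(f"{note} [{video}]")  # keep the source video next to each note
    return {s: grouped[s] for s in SECTIONS + ["Unsorted"] if s in grouped}
```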

Final outputs:

- 32 structured video summaries (~80,000 characters)

- 12 highlighted core videos (~12,000 characters)

- 5 cross-video contradiction points

- 60-page PPT deck

Numbers (vs Traditional)

| Dimension | Traditional | BibiGPT Workflow |
|---|---|---|
| Video consumption | 50 hours (full speed) / 25 hours (2x) | 2 hours (batch) + 4 hours (12 core videos) = 6 hours |
| Cross-video synthesis | Nearly impossible; relies on human memory | Native via Collection AI Chat |
| Contrarian validation | Rarely done | 1 hour |
| Total time | 3 analysts × 2 weeks | 1 consultant × 1 week |
| Source traceability | Notebooks / Word docs | Timestamp-level citations |

I didn’t outsource everything to AI. Core judgment, client context, and narrative arc are mine. BibiGPT digested the mechanical part (watching videos) so I could spend my time on judgment and storytelling. That’s the difference between this workflow and “let AI write the report.”

Who Else Can Reuse This Workflow

If any of this fits, you can copy the playbook directly:

| You are | Pain | Value of this workflow |
|---|---|---|
| Independent consultant / industry researcher | One-week deliverables; can’t digest sources fast enough | 50 hours → 6 hours |
| Sell-side / buy-side equity analyst | Must consume earnings-call recordings during reporting season | Batch summarize + Collection AI Chat |
| Industry fund investor | Needs fast judgment on a sector | Cross-interview synthesis |
| Corporate strategy / BD | Tracking competitors’ public interviews | Long-running collection with periodic queries |
| Academic researcher | Interview and conference videos beyond papers | Multi-video citation tracing |

Try It

- New here → try BibiGPT and drop in 5 candidate videos from your current research

- Existing user → create a collection for your active research topic and try Collection AI Chat

- Heavy researcher → pair with Cubox or Obsidian for permanent highlight storage

FAQ

Q1: Doesn’t “batch summarize” eat through credits?

A: BibiGPT memberships are billed by summary count. Fifty videos in one go fits comfortably within a Plus monthly allowance (see the membership page for specifics). For project-driven bursts of heavy use, as in consulting, top-up packs or the Pro tier are a better fit.

Q2: Can I trust AI summaries enough to base judgment on them?

A: Two-layer validation. Layer 1: AI summarizes and Collection AI Chat synthesizes, compressing dense material into something digestible. Layer 2: I personally watch the 12 core videos to confirm the key calls. It’s fast and safe. If a client is sensitive about a specific number, I jump back to the timestamp citation and check it verbatim; BibiGPT’s citations make this quick.

Q3: Interview content vs interviewee intent — how does AI handle context drift?

A: It doesn’t, not fully, which is why the contrarian validation step matters. I deliberately ask Collection AI Chat to surface contradictions between interviewees, because those contradictions usually sit in context-sensitive territory. AI gives me the candidate list; the judgment is mine.

Q4: Are clients OK with you using AI for research?

A: I don’t proactively announce that I used AI, but if asked, I’m transparent: AI handles the mechanical work (watching, note-taking), while judgment and narrative are mine. Clients care about quality and speed, not tools. This client gave an A-grade sign-off and asked, “How did you go this deep this fast?”

Q5: Does the same approach work beyond YouTube — podcasts / Bilibili / WeChat Channels?

A: Yes. BibiGPT supports 30+ platforms, including Bilibili, Xiaoyuzhou, Ximalaya, Douyin, and WeChat Channels. For China-market research I lean heavily on Bilibili and Xiaoyuzhou, especially for conference recordings and deep-dive podcasts.

BibiGPT Team