Bilibili AI Video Summarizer: BibiGPT + Feynman Technique for Systematic Course Learning

Combine the Feynman Technique with BibiGPT, an AI video summary tool for Bilibili: export flashcards to Anki CSV in one click for spaced repetition, use Collection AI Chat for cross-episode Q&A across a series, and subscribe to an UP主 (a Bilibili creator) to track new videos automatically. Together, these turn Bilibili's "watch-and-save" dead loop into a measurable, systematic learning system.

Quick answer: how do you systematically learn a Bilibili tutorial series with BibiGPT? The core workflow has three steps: ① subscribe to the UP主 so that new videos automatically enter the processing queue; ② add the series videos to a collection and use Collection AI Chat for cross-episode questions ("How does the concept in episode 3 relate to episode 8?"); ③ export flashcards as Anki CSV to turn what you learn on Bilibili into long-term, spaced-repetition memory.

The four-step Feynman framework is covered in the YouTube overview article. This article focuses on Bilibili's unique challenges for series learning.

Bilibili's Hidden Learning Trap: Danmaku Creates the Illusion of Understanding

Bilibili is one of the highest-quality Chinese-language platforms for knowledge content. But danmaku (scrolling comments) creates a unique learning trap: when you see "I get it!" and "aha!" floating across the screen, your brain generates a feeling of understanding, yet what you absorbed was other viewers' understanding, not your own.

Neuroscience research on mirror neurons (Rizzolatti et al.) found that observing an action activates some of the same brain regions as performing it. By analogy, watching others signal understanding may produce a similar feeling in you without transferring any actual knowledge.

Bilibili also presents a cross-episode fragmentation problem in series courses: you're watching episode 8, a concept appears that connects to episode 3, but you can't remember what episode 3 said, and have no efficient way to find it.

BibiGPT solves both of these Bilibili-specific learning obstacles.

Bilibili Feynman Three Steps: From Series Learning to Long-Term Memory

Step 1: Subscribe to UP主 — Auto-Track, Miss Nothing

On any video summary page in BibiGPT, click "Subscribe" to add the creator to your subscription list. Every new video they publish automatically enters BibiGPT's processing queue — you only need to check your subscription list periodically, no manual link pasting needed.

BibiGPT UP主 channel subscription

This is especially important for Bilibili's long-term series: following a course is like following a show, and BibiGPT tracks the summaries so you can focus on learning.

Step 2: Collection AI Chat — Cross-Episode Q&A to Build Series Knowledge Graph

Add the series videos to a BibiGPT collection, then enter Collection AI Chat mode:

Cross-episode questioning:

- "What's the fundamental difference between the 'recursion' in episode 3 and 'tail recursion' in episode 8?"

- "Which concept in this entire series is the foundation for everything else?"

- "Does the UP主's description of 'complexity analysis' in episode 5 and episode 12 contradict or progressively deepen?"

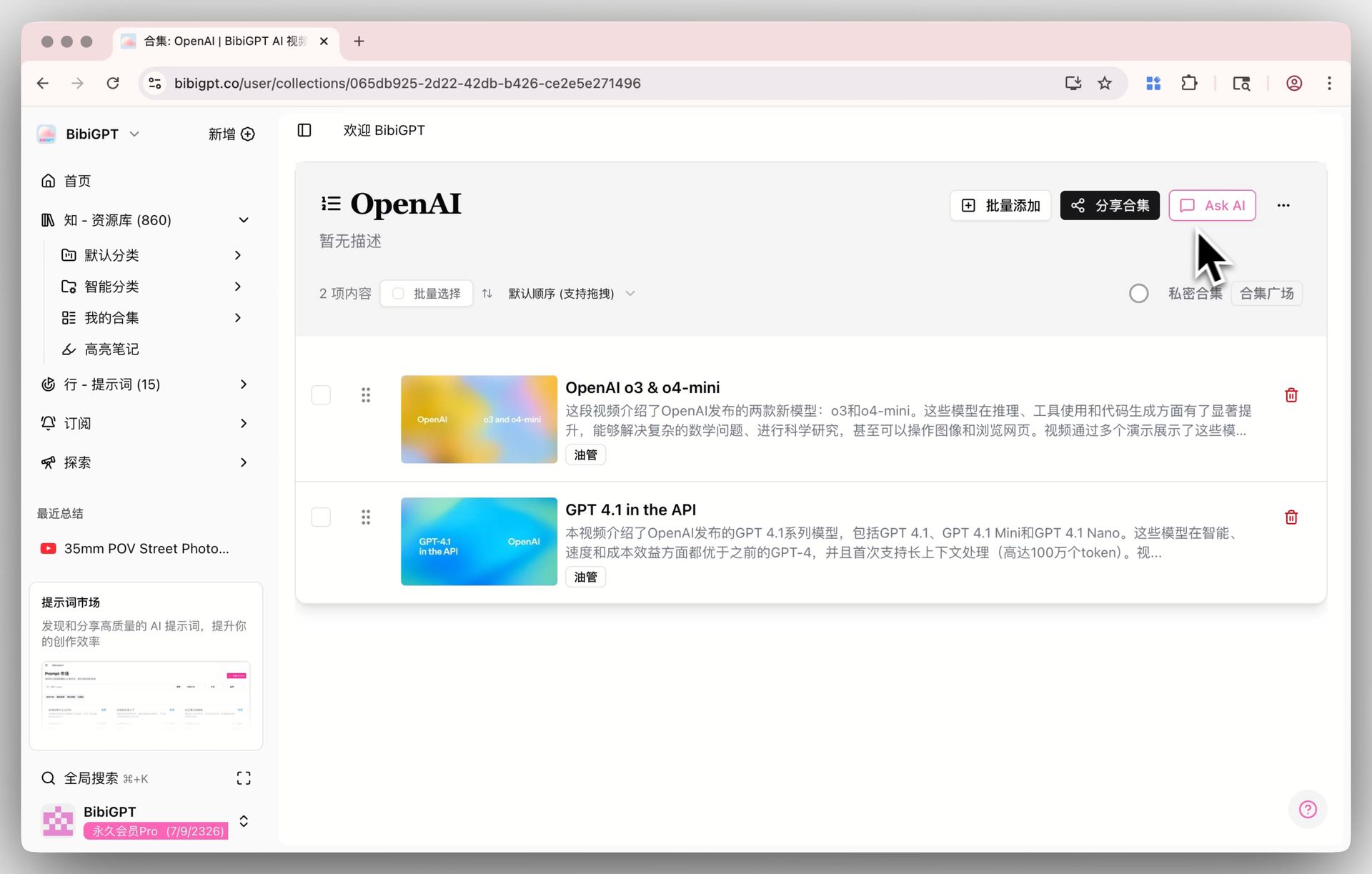

BibiGPT Collection AI Chat entry

AI integrates all series content to give cross-episode synthesized answers — something impossible when looking at individual episode summaries.

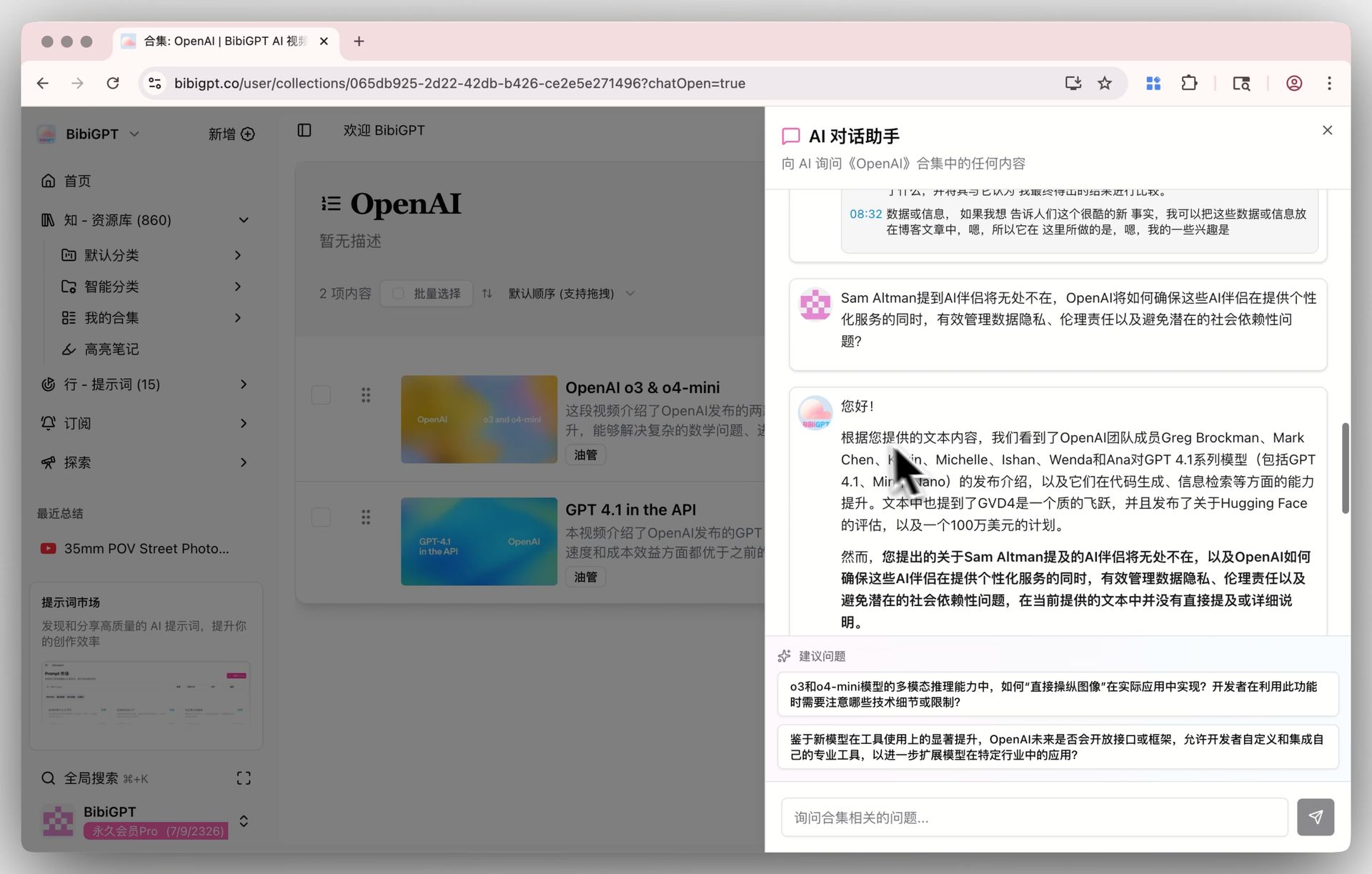

BibiGPT Collection AI Chat: cross-episode Q&A

Step 3: Anki CSV Export — Convert Bilibili Learning into Long-Term Memory Assets

This is the core tool that the Bilibili article adds to the Feynman series: exporting flashcards as Anki CSV.

For each episode, generate BibiGPT flashcards:

- BibiGPT auto-extracts Q&A cards from video content

- Interactive flip-card review: question front, answer back

- Click "Export CSV" — one-click export in Anki-compatible format

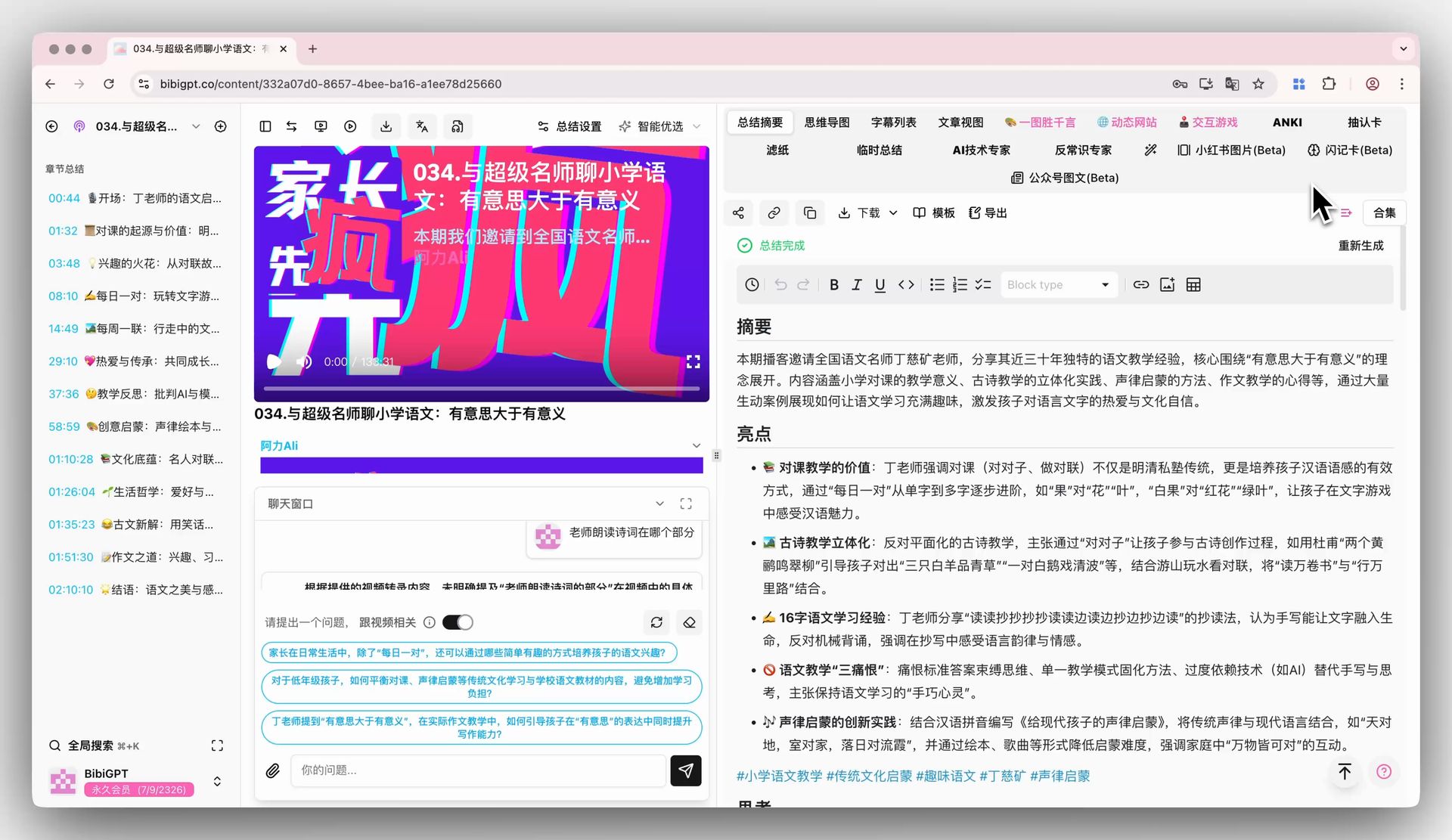

BibiGPT flashcard: click flashcard tab

BibiGPT flashcard: export to CSV option

After importing into Anki, spaced repetition algorithms push review reminders just before you would forget a concept. This is the correct way to "permanently store" Bilibili knowledge — not re-watching videos.
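To make the import step concrete, here is a minimal sketch of a two-column flashcard CSV, with the question in the first column and the answer in the second. The exact columns that BibiGPT exports are not described here, so the layout, the sample cards, and the `cards_to_csv` helper are all assumptions for illustration.

```python
import csv
import io

# Hypothetical sample cards; real cards would come from BibiGPT's flashcard export.
cards = [
    ("What does LIFO stand for?",
     "Last in, first out: the most recently pushed item is popped first."),
    ("How does a queue differ from a stack?",
     "A queue is FIFO, so items leave in the order they arrived."),
]

def cards_to_csv(cards):
    """Return CSV text with one card per row: front (question), back (answer)."""
    buffer = io.StringIO()
    # Quote every field so commas inside an answer do not split the column.
    writer = csv.writer(buffer, quoting=csv.QUOTE_ALL, lineterminator="\n")
    for front, back in cards:
        writer.writerow([front, back])
    return buffer.getvalue()

csv_text = cards_to_csv(cards)

# Round-trip check: parsing the text recovers the original cards,
# including answers that contain commas.
parsed = [tuple(row) for row in csv.reader(io.StringIO(csv_text))]
```

A file with this shape can be loaded through Anki's File → Import dialog, where the first column is typically mapped to the front of the card and the second to the back.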

Bilibili-Exclusive: Using Danmaku as Feynman Prompts

Danmaku, used correctly, is an excellent source of Feynman gap signals:

- See danmaku: "But what about when XXX condition applies?" → This is a gap worth exploring

- Ask BibiGPT: "Under what assumptions does the UP主's conclusion hold? What about the XXX case mentioned in comments?"

- Use AI's explanation to verify real understanding of boundary conditions

Danmaku shifts from "others' understanding projection" to "your own questioning starting point."

Case Study: Feynman + BibiGPT for Bilibili Python Data Structures Series

25-episode Python Data Structures & Algorithms series:

Setup (once):

- Subscribe to UP主 → first 10 episodes auto-processed

- Create "Python Data Structures" collection

Per-episode workflow (15 min each):

- BibiGPT summary → identify Feynman target (e.g., "understand LIFO")

- Attempt an explanation → can't clearly distinguish a stack from a queue

- Ask AI → understanding deepens

- Generate flashcards → export to Anki

After episode 10 (mid-series checkpoint):

- Collection AI Chat → "What are the core data structures in episodes 1-10? How do they relate?"

- AI generates cross-episode knowledge map: array → linked list → stack → queue → hash table

Course completion:

- Anki deck: 25 episodes × ~8 cards = ~200 Q&A cards

- Measurable test: can you explain why hash collisions affect query efficiency without watching the video?
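A deck like this pays off because each card is reviewed at growing intervals rather than all at once. The sketch below illustrates the idea with a deliberately simplified rule, where the gap doubles after every successful review. This is not Anki's actual scheduler; the `review_dates` function, the doubling multiplier, and the start date are assumptions chosen only to show the shape of a spaced-repetition schedule.

```python
from datetime import date, timedelta

def review_dates(start, reviews, first_interval_days=1, multiplier=2.0):
    """Return review dates for a card that is answered correctly every time.

    Simplified assumption: the gap before each review is the previous gap
    times `multiplier`. This is not Anki's actual scheduling algorithm.
    """
    dates = []
    interval = first_interval_days
    current = start
    for _ in range(reviews):
        current = current + timedelta(days=round(interval))
        dates.append(current)
        interval *= multiplier
    return dates

start = date(2024, 1, 1)  # hypothetical day the card was first learned
schedule = review_dates(start, reviews=5)

# Days elapsed since the card was learned, for each review.
# Gaps of 1, 2, 4, 8, 16 days give offsets 1, 3, 7, 15, 31.
offsets = [(d - start).days for d in schedule]
```

Under this toy rule, a card learned today needs only five reviews in its first month, which is why a deck of about 200 cards stays manageable.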

Feynman × BibiGPT Series

- Full Feynman framework: YouTube overview article

- Podcast commute learning: Xiaoyuzhou + 45-min commute workflow

- Xiaohongshu: Batch collection to build knowledge system from fragments

- TED Talks: Video-to-article + speech framework extraction

- Douyin: Search-driven learning vs. algorithm recommendations

Start learning more efficiently with AI:

- 🌐 Official Website: https://aitodo.co

- 📱 Mobile Download: https://aitodo.co/app

- 💻 Desktop Download: https://aitodo.co/download/desktop

- ✨ Learn More Features: https://aitodo.co/features

BibiGPT Team